BANG! BANG! TESTING 1, 2, 3… Is this ****ing thing even on?

Oh. Right. Eventually I’ll remember how all this stuff works.

It’s been far too long without any updates – many apologies for that.

I’m being asked (a lot, understandably) if we are ever going to release any more courses at VirtualPairProgrammers.com. Or, indeed, if we are even going to continue. Here’s a full and honest update…but as usual for me it will be a long ramble!

The TLDR: we are continuing VirtualPairProgrammers for as long as it doesn’t cost us money to run. That will be at least a year. If we need to close, we will implement a “sunsetting” process so that all subscriptions continue to run.

And there will be new courses in 2025. Almost certainly¹.

The detail…

As you know, we’ve definitely slowed down. I can’t remember when we last released. Don’t keep a subscription open if you’re waiting for new courses, because updates will be sporadic. Sadly, Matt Greencroft has left the business², so if you’re a fan of his, definitely don’t keep a sub open!

I’m still here. I took a back seat for a long time as I was rebuilding a house. Painful, but much easier than building software. I might do some blog posts where I overshare what’s actually been going on. But no one cares about that. Where are the courses? I am planning to get a release schedule back up and running. My very rough plan is:

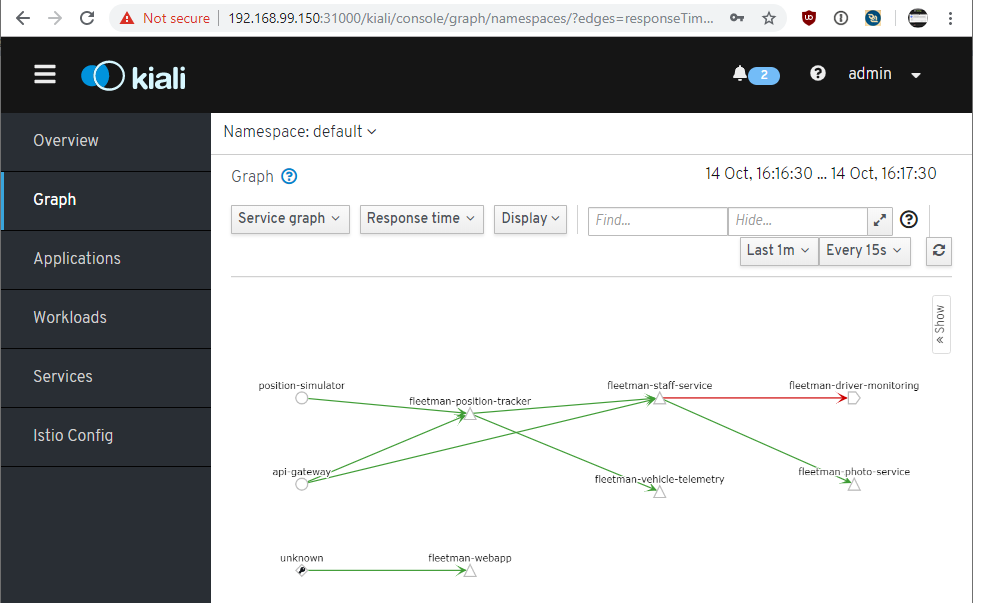

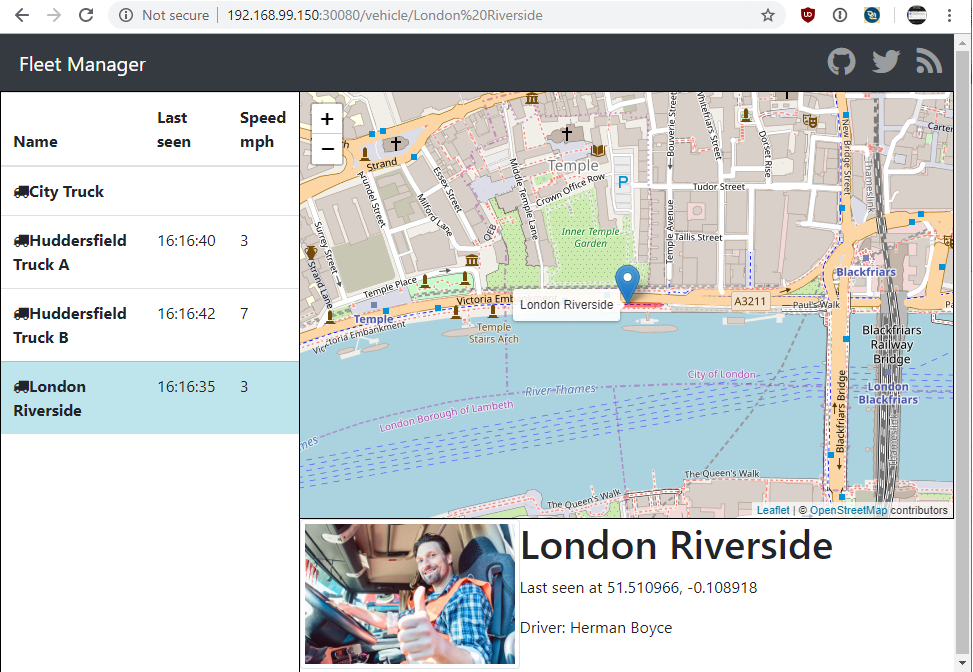

1) A quick release ASAP covering federated authentication of microservices. I’m doing this so I can get back up and running, but also because this is a major omission from the existing courses. It will also lead to an update to the Istio course covering Istio’s auth support. That is minor, but it will be good to tick it off.

2) A full course on GitOps. Details very much to be confirmed, but the usual stuff.

3) A full course on serverless. Perhaps too late for market but I feel we need coverage of this.

All of the above is very much provisional. I’ve actually announced some of it before, but due to business problems I’ve had to postpone several times. Market conditions, as you know, are poor, so if any of the above fails to sell, it may be necessary to either stop or re-plan.

We won’t be closing the site any time soon (not for at least a year), and we will undertake a full “sunsetting” process if we do have to close, i.e. all existing subscriptions will continue to run.

Sorry that the update is a bit bleak. I am going to give it a very good attempt, but with “major training platforms” now dominating everything and prices in a race to the bottom, it is sadly a very bad time for creators of online training.

I hope that somehow we manage to provide something of use to you in 2025! All the best!